Despite limitations, “MarioVGG” makers think AI video could one day replace game engines.

Last month, Google’s GameNGen AI model showed that generalized image diffusion techniques can be used to generate a passable, playable version of Doom. Now, researchers are using some similar techniques with a model called MarioVGG to see if an AI model can generate plausible video of Super Mario Bros. in response to user inputs.

The results of the MarioVGG model—available as a pre-print paper published by the crypto-adjacent AI company Virtuals Protocol—still display a lot of apparent glitches, and it’s too slow for anything approaching real-time gameplay at the moment. But the results show how even a limited model can infer some impressive physics and gameplay dynamics just from studying a bit of video and input data.

The researchers hope this represents a first step toward “producing and demonstrating a reliable and controllable video game generator,” or possibly even “replacing game development and game engines completely using video generation models” in the future.

Watching 737,000 frames of Mario

To train their model, the MarioVGG researchers (GitHub users erniechew and Brian Lim are listed as contributors) started with a public data set of Super Mario Bros. gameplay containing 280 “levels'” worth of input and image data arranged for machine-learning purposes (level 1-1 was removed from the training data so images from it could be used in the evaluation). The more than 737,000 individual frames in that data set were “preprocessed” into 35-frame chunks so the model could start to learn what the immediate results of various inputs generally looked like.
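As a rough illustration of that chunking step, here is a minimal Python sketch. It assumes the chunks are consecutive and non-overlapping and that a short leftover tail is dropped; the article's source does not spell out either detail, and `chunk_frames` is a hypothetical helper name, not something from the MarioVGG code.

```python
def chunk_frames(frames, chunk_size=35):
    """Split a frame sequence into consecutive chunks of `chunk_size`.

    Assumption: chunks do not overlap and an incomplete final chunk
    is discarded. The real preprocessing may differ.
    """
    usable = len(frames) - len(frames) % chunk_size
    return [frames[i:i + chunk_size] for i in range(0, usable, chunk_size)]


# Stand-in "frames": plain integers instead of images.
demo_chunks = chunk_frames(list(range(100)))
```

With 100 stand-in frames, this yields two full 35-frame chunks and drops the remaining 30 frames.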

To “simplify the gameplay situation,” the researchers decided to focus only on two potential inputs in the data set: “run right” and “run right and jump.” Even this limited movement set presented some difficulties for the machine-learning system, though, since the preprocessor had to look backward for a few frames before a jump to figure out if and when the “run” started. Any jumps that included mid-air adjustments (i.e., the “left” button) also had to be thrown out because “this would introduce noise to the training dataset,” the researchers write.
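The filtering rules described above could look something like the following Python sketch. The per-frame input format (a set of pressed button names), the button names themselves, and both function names are hypothetical, chosen only for illustration; this is not the researchers' preprocessor.

```python
def find_run_start(inputs, jump_index):
    """Walk backward from a jump frame to find where the rightward run began.

    `inputs` is a list with one set of pressed buttons per frame
    (a hypothetical format).
    """
    start = jump_index
    while start > 0 and "right" in inputs[start - 1]:
        start -= 1
    return start


def label_chunk(inputs):
    """Return "run", "jump", or None if the chunk should be thrown out."""
    if any("left" in pressed for pressed in inputs):
        return None  # mid-air adjustment: treated as noise and discarded
    if not all("right" in pressed for pressed in inputs):
        return None  # assumption: only continuous rightward movement is kept
    if any("jump" in pressed for pressed in inputs):
        return "jump"
    return "run"
```

For example, a chunk where every frame holds "right" and one frame also holds "jump" would be labeled "jump," while any chunk containing a "left" press would be dropped.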

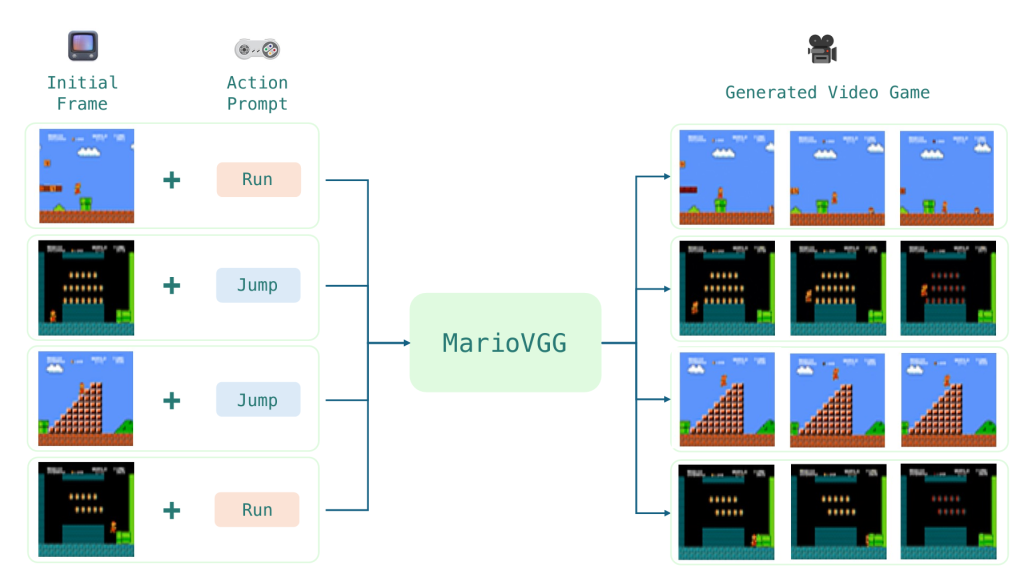

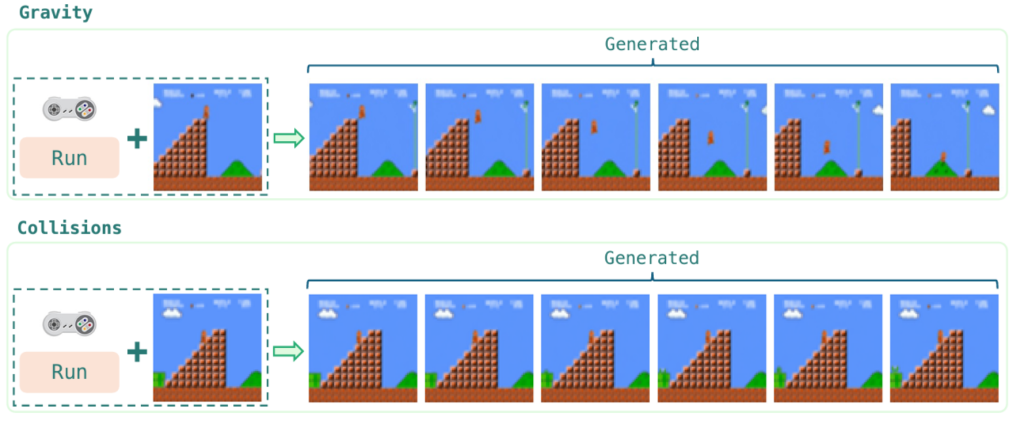

- MarioVGG takes a single gameplay frame and a text input action to generate multiple video frames. MarioVGG

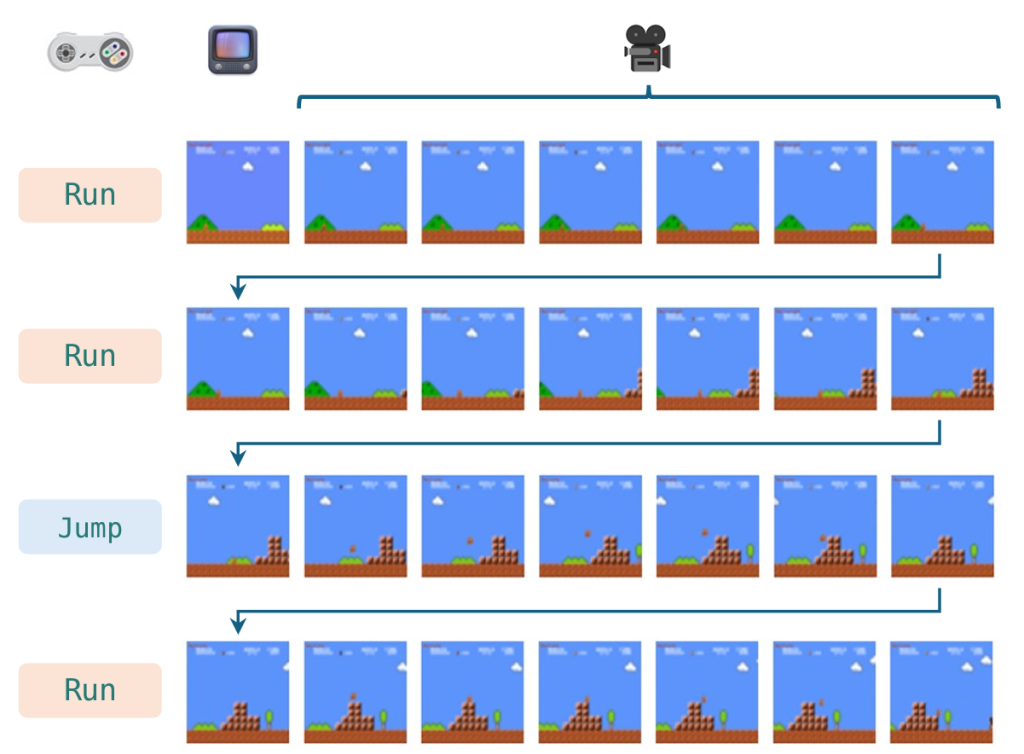

- The last frame of a generated video sequence can be used as the baseline for the next set of frames in the video. MarioVGG

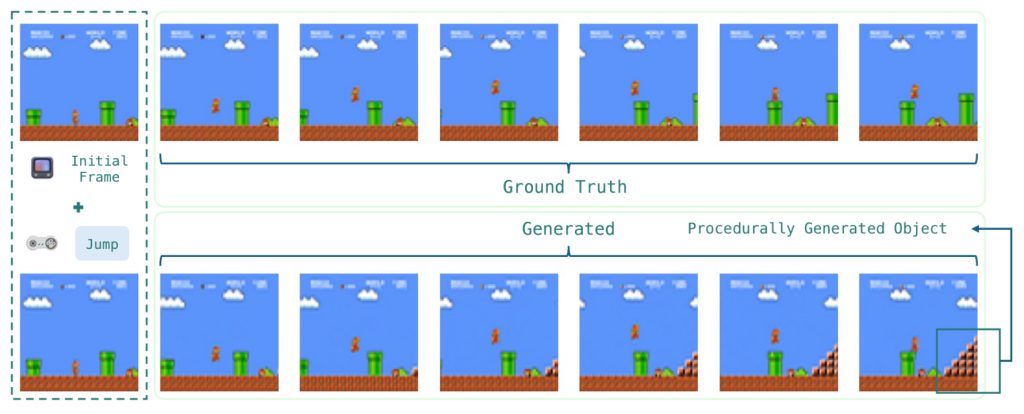

- The AI-generated arc of Mario’s jump is pretty accurate (even as the algorithm creates random obstacles as the screen “scrolls”). MarioVGG

- MarioVGG was able to infer the physics of behaviors like running off a ledge or running into obstacles. MarioVGG

- A particularly bad example of a glitch that causes Mario to simply disappear from the scene at points. MarioVGG

After preprocessing (and about 48 hours of training on a single RTX 4090 graphics card), the researchers used a standard convolution and denoising process to generate new frames of video from a static starting game image and a text input (either “run” or “jump” in this limited case). While these generated sequences only last for a few frames, the last frame of one sequence can be used as the first of a new sequence, feasibly creating gameplay videos of any length that still show “coherent and consistent gameplay,” according to the researchers.
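The chaining idea can be sketched in a few lines of Python. Here `generate_clip` is a hypothetical stand-in for the model (any function that takes a starting frame and an action and returns a short list of new frames), and the sketch assumes the returned clip does not repeat its starting frame. The fake generator below just moves an integer "position" so the loop can be run without a real model.

```python
def rollout(first_frame, actions, generate_clip):
    """Chain short clips into a longer video.

    The last frame produced so far is fed back in as the starting
    frame for the next clip.
    """
    video = [first_frame]
    for action in actions:
        clip = generate_clip(video[-1], action)
        video.extend(clip)
    return video


def fake_generate_clip(start_frame, action, frames_per_clip=7):
    """Toy stand-in for the model: frames are integers, not images."""
    step = 2 if action == "jump" else 1
    return [start_frame + step * (i + 1) for i in range(frames_per_clip)]


demo_video = rollout(0, ["run", "jump"], fake_generate_clip)
```

One consequence of this design is that any glitch in a clip's final frame becomes the starting point for everything that follows.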

Super Mario 0.5

Even with all this setup, MarioVGG isn’t exactly generating silky smooth video that’s indistinguishable from a real NES game. For efficiency, the researchers downscale the output frames from the NES’ 256×240 resolution to a much muddier 64×48. They also condense 35 frames’ worth of video time into just seven generated frames that are distributed “at uniform intervals,” creating “gameplay” video that’s much rougher-looking than the real game output.
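To make those two reductions concrete, here is a small pure-Python sketch. It assumes the seven frames are taken at a fixed stride starting from the first frame, and it uses nearest-neighbor sampling for the downscale; the article's source specifies neither choice, and both function names are hypothetical. Note that 256 to 64 is a factor of 4 horizontally while 240 to 48 is a factor of 5 vertically, so the downscaled frame is not a uniform shrink.

```python
def sample_uniform(frames, count=7):
    """Pick `count` frames at a fixed stride (assumed to start at frame 0)."""
    stride = len(frames) // count
    return [frames[i * stride] for i in range(count)]


def downscale_nearest(image, out_width=64, out_height=48):
    """Nearest-neighbor downscale of an image stored as rows of pixels.

    Assumption: the actual resampling method used for MarioVGG is unknown.
    """
    in_height = len(image)
    in_width = len(image[0])
    return [
        [image[y * in_height // out_height][x * in_width // out_width]
         for x in range(out_width)]
        for y in range(out_height)
    ]


# A 256x240 stand-in image whose "pixels" record their own coordinates.
demo_image = [[(x, y) for x in range(256)] for y in range(240)]
demo_small = downscale_nearest(demo_image)
```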

Despite those limitations, the MarioVGG model still struggles to even approach real-time video generation at this point. The single RTX 4090 used by the researchers took six whole seconds to generate a six-frame video sequence, representing just over half a second of video, even at an extremely limited frame rate. The researchers admit this is “not practical and friendly for interactive video games” but hope that future optimizations in weight quantization (and perhaps use of more computing resources) could improve this rate.
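A quick back-of-the-envelope calculation shows how far that is from real time. This sketch assumes each generated sequence covers one 35-frame chunk of gameplay and that the NES runs at roughly 60 frames per second; the constant names are hypothetical.

```python
GENERATION_SECONDS = 6.0   # reported time to generate one sequence
GAME_FRAMES_COVERED = 35   # assumption: one sequence spans one 35-frame chunk
NES_FPS = 60.0             # approximate NES frame rate


def realtime_slowdown(generation_seconds, game_frames, fps):
    """How many times slower than real time the generation runs."""
    gameplay_seconds = game_frames / fps
    return generation_seconds / gameplay_seconds


gameplay_seconds = GAME_FRAMES_COVERED / NES_FPS
slowdown = realtime_slowdown(GENERATION_SECONDS, GAME_FRAMES_COVERED, NES_FPS)
```

Under those assumptions, each sequence covers about 0.58 seconds of gameplay, so generation runs roughly 10 times slower than the game itself.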

With those limits in mind, though, MarioVGG can create some passably believable video of Mario running and jumping from a static starting image, akin to Google’s Genie game maker. The model was even able to “learn the physics of the game purely from video frames in the training data without any explicit hard-coded rules,” the researchers write. This includes inferring behaviors like Mario falling when he runs off the edge of a cliff (with believable gravity) and (usually) halting Mario’s forward motion when he’s adjacent to an obstacle.

- Some lengthier MarioVGG-generated sequences, which eventually start to show some implausible results. MarioVGG

- Neither enemies nor walls seem able to stop Mario in some of these MarioVGG examples. MarioVGG

- Mario falling through a bridge and turning into a Cheep-Cheep (right-most example) is never going to not be funny. MarioVGG

And while MarioVGG was focused on simulating Mario’s movements, the researchers found that the system could effectively hallucinate new obstacles for Mario as the video scrolls through an imagined level. These obstacles “are coherent with the graphical language of the game,” the researchers write, but can’t currently be influenced by user prompts (e.g., put a pit in front of Mario and make him jump over it).

Just make it up

Like all probabilistic AI models, though, MarioVGG has a frustrating tendency to sometimes give completely useless results. Sometimes that means just ignoring user input prompts (“we observe that the input action text is not obeyed all the time,” the researchers write). Other times, it means hallucinating obvious visual glitches: Mario sometimes lands inside obstacles, runs through obstacles and enemies, flashes different colors, shrinks/grows from frame to frame, or disappears completely for multiple frames before reappearing.

One particularly absurd video shared by the researchers shows Mario falling through the bridge, becoming a Cheep-Cheep, then flying back up through the bridge and transforming into Mario again. That’s the kind of thing we’d expect to see from a Wonder Flower, not an AI video of the original Super Mario Bros.

The researchers surmise that training for longer on “more diverse gameplay data” could help with these significant problems and help their model simulate more than just running and jumping inexorably to the right. Still, MarioVGG stands as a fun proof-of-concept that shows even limited training data and algorithms can create some decent starting models of basic games.