Apple debuts new catchall AI branding, generative features during WWDC 2024 keynote.

On Monday, Apple debuted “Apple Intelligence,” a new suite of free AI-powered features for iOS 18, iPadOS 18, and macOS Sequoia that includes email summaries, image and emoji generation, and the ability for Siri to take actions on your behalf. The features rely on a combination of on-device and cloud processing, with a strong emphasis on privacy. Apple says Apple Intelligence will be available to developers as a beta this summer and widely available later this year.

The announcements came during a livestreamed WWDC keynote and a simultaneous press event on Apple’s campus in Cupertino, California. In his introduction, Apple CEO Tim Cook said the company has been using machine learning for years, but that large language models (LLMs) present new opportunities to elevate the capabilities of Apple products. He emphasized that Apple’s approach requires both personalization and privacy.

At last year’s WWDC, Apple avoided the term “AI” entirely, preferring terms like “machine learning” as a way to sidestep buzzy hype while still integrating AI into its apps in useful ways. This year, Apple found a new way to largely avoid the abbreviation by coining “Apple Intelligence,” a catchall branding term for a broad group of machine learning, LLM, and image generation technologies. By our count, the term “AI” appeared only sparingly in the keynote, most notably near the end of the presentation, when Apple executive Craig Federighi said, “It’s AI for the rest of us.”

The Apple Intelligence umbrella includes a range of features that require an iPhone 15 Pro, an iPhone 15 Pro Max, or an iPad or Mac with an M1 chip or later. Devices must also have Siri enabled and set to US English. The features include notification prioritization to minimize distractions; writing tools that can summarize text, change its tone, or suggest edits; and the ability to generate personalized images for contacts. Through Siri, the system can also carry out tasks on the user’s behalf, such as retrieving files shared by a specific person or playing a podcast sent by a family member.

Siri’s new brain

Apple says that privacy is a key priority in its implementation of Apple Intelligence. For some features, on-device processing means that personal data is never transmitted to or processed in data centers. For complex requests that can’t run locally on a pocket-sized LLM, Apple has developed “Private Cloud Compute,” which sends only the data relevant to a request to Apple’s servers, which Apple says do not retain it. Apple claims this process is transparent and that experts can inspect the server code to verify those privacy guarantees.

Under Apple Intelligence, Siri gets a big boost with a new logo and on-screen design, a new ability to understand more nuanced requests, and the ability to answer questions about the system state or take actions on the user’s behalf.

Users can communicate with Siri using either voice or text input, and Siri can maintain context between requests.

The redesigned Siri also reportedly demonstrates onscreen awareness, allowing it to perform actions related to information displayed on the screen, such as adding an address from a Messages conversation to a contact card. Apple says the new Siri can execute hundreds of new actions across both Apple and third-party apps, such as finding book recommendations sent by a friend in Messages or Mail, or sending specific photos to a contact mentioned in a request.

Apple Intelligence widely integrated

Apple Intelligence features have also been integrated throughout Apple’s apps. In Mail, they enable one-tap summaries of long emails and prioritize messages by importance. In the Notes and Phone apps, users can record and transcribe audio, then get an AI-generated summary of the transcript. There’s also a new Focus mode called “Reduce Interruptions” that shows only prioritized notifications that might need immediate attention. Untangling all the ways Apple has baked these features into its operating systems, how they work, and whether they have any privacy implications will take some time.

On a more entertaining front, Apple also debuted a new feature called Genmoji, which allows users to create personalized emoji by typing a description. The system generates a custom Genmoji based on the user’s input, along with additional options. Alternatively, users can create Genmoji representations of friends and family members using their photos. Apple says that generated Genmoji are seamlessly integrated into messages and can be shared as stickers or used as reactions in Tapbacks, similar to traditional emoji.

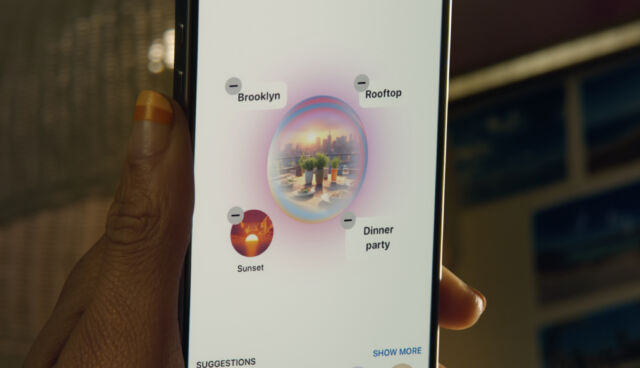

Apple also debuted Image Playground, an AI image synthesis app powered by an as-yet-unknown AI model, for generating images from written prompts. It offers three styles: Animation, Illustration, or Sketch. Image Playground is integrated into apps like Messages and is also available as a standalone app. Users can select from various concepts across different categories, enter descriptions to define images, include people from their personal photo library, and choose their desired style. When used within Messages, Image Playground suggests concepts related to the current conversation, aiming to make image creation more relevant.

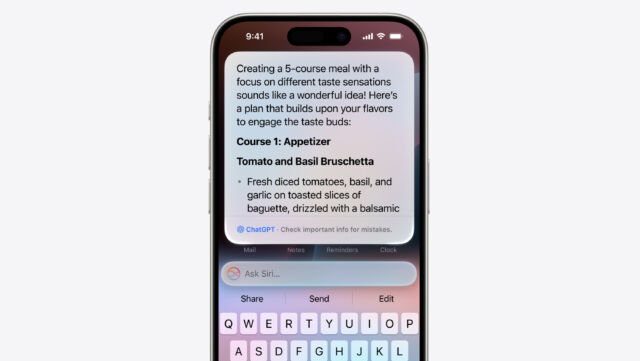

Near the end of the WWDC keynote, Apple confirmed longstanding rumors of a deal with OpenAI by announcing a new ChatGPT integration in iOS, which we have covered in a separate piece. It works similarly to a Siri search but lets users choose whether to send a query to OpenAI.

It’s worth noting that Apple says it will add “support for other AI models in the future” to this kind of chatbot integration. Given known talks between Google and Apple, integrated Gemini AI support may be on the way. It’s also worth mentioning that Apple’s new AI features have only just been announced and are not yet available for us or the public to evaluate, so they may change as Apple gets closer to release.